You built a beautiful website. Courses are loaded. Sales pages are live. Content is flowing. But Google barely knows you exist. And ChatGPT? It’s never heard of you.

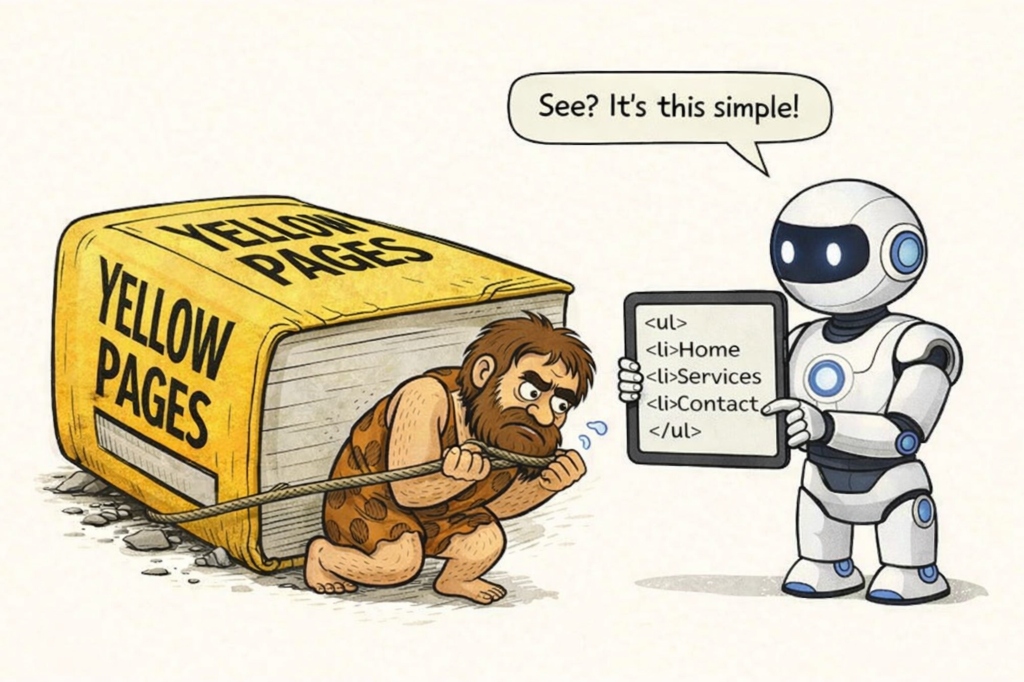

Here’s the problem: no-code website builders are designed for humans, not machines. Platforms like Kajabi, Squarespace, Wix, and Webflow make it easy to publish, but they don’t make it easy to get discovered.

And in 2025, machines decide who gets found. Over 80% of enterprises are expected to deploy generative AI by 2026, and ChatGPT alone has 500 million monthly users. If your content isn’t readable by these systems, you’re invisible to a massive and growing audience.

There are three terms you’ll keep bumping into as you try to fix this: Schema.org, blog engine, and LLMs.txt. They sound intimidating. They’re not. Let me break them down.

Schema.org: Labels for Robots

Think of your website as a pile of stuff on a table. A human can look at it and figure out what’s what. A machine? It just sees chaos.

Schema markup is how you label everything so machines can understand it. “This is a course.” “This is a review.” “This is our business address.”

It’s a standardised vocabulary that Google, Bing, and AI assistants all recognise. When you add it to your site, you’re essentially sticking name tags on your content.

- Pages with schema markup get up to 30% higher click-through rates than pages without it.

- Users spend 1.5x more time on pages with structured data.

- Rich results get clicked 58% of the time versus 41% for standard results.

- Only about 30% of websites actually use schema markup.

That last stat is the opportunity. Most of your competitors haven’t figured this out yet.

Here’s a real example. This code tells search engines, “hey, this page is about an online course”:

    {
      "@context": "https://schema.org",
      "@type": "Course",
      "name": "Your Course Name",
      "description": "Learn how to do the thing your course teaches.",
      "provider": {
        "@type": "Organization",
        "name": "Your Business Name",
        "url": "https://www.yourdomain.com"
      }
    }

You paste this into your page’s custom code section, wrapped in a script tag whose type is application/ld+json, and suddenly machines know exactly what you’re offering.
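If you have more than one course, writing that block by hand for each page gets tedious. Here is a minimal Python sketch that builds the same Course markup and wraps it in the script tag. The function name course_jsonld_script and its parameters are made up for illustration; they are not part of any platform’s API.

```python
import json


def course_jsonld_script(name, description, provider_name, provider_url):
    """Return a <script> tag containing Course JSON-LD (hypothetical helper)."""
    data = {
        "@context": "https://schema.org",
        "@type": "Course",
        "name": name,
        "description": description,
        "provider": {
            "@type": "Organization",
            "name": provider_name,
            "url": provider_url,
        },
    }
    # Escape "</" so a value containing "</script>" cannot end the tag early.
    # "\/" is a valid JSON escape, so parsers still read the original text.
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    print(course_jsonld_script(
        "Your Course Name",
        "Learn how to do the thing your course teaches.",
        "Your Business Name",
        "https://www.yourdomain.com",
    ))
```

Run it, copy the printed tag, and paste it into the custom code section of the matching page.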

Why this matters for no-code builders: most platforms don’t generate this automatically. Your courses, offers, and posts are unlabelled by default. Without schema, AI systems might misclassify your content or skip it entirely.

Here’s the kicker: a benchmark study found that AI models grounded in structured data achieve 300% higher accuracy compared to those working with unstructured content. Schema doesn’t just help you get found. It helps AI get you right.

Blog Engine: The Publishing Machine Behind the Curtain

When people say “blog engine,” they mean the part of your platform that handles publishing articles.

Every website builder has one. It’s the section in your dashboard where you write posts, add tags, and hit publish. Simple as that.

The blog engine handles the boring stuff: formatting your post, giving it a URL, organising it by category, and making it show up on your site. You don’t need to code anything.

The catch: most blog engines don’t add schema markup to your posts automatically. So while publishing is easy, your articles might still be invisible to AI search. That’s a separate problem you’ll need to solve.
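One way to find out whether your blog engine adds any schema is to look at a published post’s HTML source. The Python sketch below scans a page’s HTML for JSON-LD script tags and lists the types they declare. It uses only the standard library; the names JsonLdFinder and schema_types are invented for this example, and it reads top-level types only, so markup nested under "@graph" would need extra handling.

```python
import json
from html.parser import HTMLParser


class JsonLdFinder(HTMLParser):
    """Collect the contents of every <script type="application/ld+json"> tag."""

    def __init__(self):
        super().__init__()
        self._in_jsonld = False
        self._buffer = []
        self.blocks = []

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append("".join(self._buffer))
            self._in_jsonld = False


def schema_types(html):
    """Return the top-level @type values found in a page's JSON-LD blocks."""
    finder = JsonLdFinder()
    finder.feed(html)
    finder.close()
    types = []
    for block in finder.blocks:
        try:
            data = json.loads(block)
        except json.JSONDecodeError:
            continue  # skip malformed markup instead of crashing
        items = data if isinstance(data, list) else [data]
        for item in items:
            if isinstance(item, dict) and "@type" in item:
                types.append(item["@type"])
    return types
```

Paste a post’s page source into schema_types as a string. An empty list means the post carries no JSON-LD labels at all.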

LLMs.txt: A Map for AI Crawlers

This one’s newer. LLMs.txt is a proposed standard for telling AI models which pages on your site matter most.

Think of it like a curated tour guide. Instead of making ChatGPT or Claude crawl your entire website and figure things out, you hand them a simple file that says: “Here’s what’s important. Here’s how to interpret it. Here’s what you can and can’t use.”

The file lives at your website’s root (for example, https://www.yourdomain.com/llms.txt) and can look something like this:

    # AI Visibility Studio

    > We help Kajabi creators get discovered by AI search engines.

    ## Key Pages

    - [Course Library](https://www.aivisibilitystudio.com/courses): Our main offers
    - [Setup Guide](https://www.aivisibilitystudio.com/install-guide): How to add schema

    ## Permissions

    - You may cite our blog posts with attribution.
    - Do not use course content for AI training without permission.

The reality check: adoption is still extremely low. Studies have found only a small fraction of domains publish an LLMs.txt file, and there’s no hard evidence that simply having one boosts citations on its own.

But it is still useful conceptually. Understanding LLMs.txt teaches you how AI crawlers may discover and prioritise content in the future. Having a basic file won’t hurt. Just don’t build your whole strategy around it yet.
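If you do want a basic file, it is easy to generate from a list of pages. The Python sketch below assembles text in the same layout as the example file: a title, a one-line summary, then sections of links. The function name build_llms_txt and its arguments are assumptions made for illustration, and it covers link sections only, so plain-text sections such as permissions would be added by hand.

```python
def build_llms_txt(site_name, summary, sections):
    """Build llms.txt text (hypothetical helper).

    `sections` maps a section heading to a list of (title, url, note) tuples.
    """
    lines = [f"# {site_name}", "", f"> {summary}"]
    for heading, links in sections.items():
        lines += ["", f"## {heading}", ""]
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    print(build_llms_txt(
        "AI Visibility Studio",
        "We help Kajabi creators get discovered by AI search engines.",
        {
            "Key Pages": [
                ("Course Library",
                 "https://www.aivisibilitystudio.com/courses",
                 "Our main offers"),
            ],
        },
    ))
```

Save the output as a plain text file and upload it wherever your platform lets you place files at the site root.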

What This Means for You

These three concepts boil down to one idea: machines need help understanding your content.

Schema.org labels your pages so search engines and AI assistants know what they’re looking at.

Your blog engine publishes content, but it doesn’t make that content machine-readable by default.

LLMs.txt is an emerging way to guide AI crawlers, though it’s not essential yet.

If you’re using a no-code builder and wondering why you’re invisible despite doing everything “right,” this is usually why. These platforms handle the human side beautifully. The machine side is still on you.

Next Steps

If you want to go deeper:

None of this is as scary as it sounds. Once you know what these terms mean, you can make smarter decisions about your site, or at least know what questions to ask.

Your site might look great to humans. But does it look great to machines? That’s the question that matters now.

Originally published on Medium ↗
